Intro

Some of the most visited posts on this blog relate to dependency injection in .NET. As you may know, dependency injection has been baked into ASP.NET almost since the beginning, but it culminated with the MVC framework and the .NET Core rewrite. It has since been split into separate packages that can be used anywhere. However, probably because they thought it was such a core concept, or maybe because it is code that has been carried along since the days of UnityContainer, the entire mechanism is sealed, internal and offers no hooks for adding custom code. In my view, that is crazy, since dependency injection serves, among other things, as the single point of change for class instantiation.

Now, to be fair, I am not an expert in the design patterns used by the .NET dependency injection code. There might be some way to extend it that I am unaware of; if so, please enlighten me. But as far as I could dig into the code, this is the simplest way I found to insert my own hook into the resolution process. If you just want the code, skip to the end.

Using DI

First of all, a recap of how to use dependency injection (from scratch) in a console application:

```csharp
// you need the NuGet packages Microsoft.Extensions.DependencyInjection
// and Microsoft.Extensions.DependencyInjection.Abstractions
using Microsoft.Extensions.DependencyInjection;
...
// create a service collection
var services = new ServiceCollection();
// add the mappings between interface and implementation
services.AddSingleton<ITest, Test>();
// build the provider
var provider = services.BuildServiceProvider();
// get the instance of a service
var test = provider.GetService<ITest>();
```

Note that this is a very simplified scenario. For more details, please check Creating a console app with Dependency Injection in .NET Core.

Recommended pattern for DI

Second of all, a recap of the way of using dependency injection that both Microsoft and I recommend, which is... constructor injection. It serves two purposes:

- It declares all the dependencies of an object in the constructor. You can rest assured that everything the object needs in order to work is listed there.

- When the constructor starts to fill a page, you get a strong hint that your class may be doing too many things and you should split it up.
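A minimal sketch of what this looks like, with no container involved; `IClock`, `FixedClock` and `ReportService` are hypothetical names chosen only for illustration. The constructor is the only way to build a `ReportService`, so its parameter list doubles as the list of its dependencies.

```csharp
using System;
using System.Globalization;

// Hypothetical dependency, defined only for this illustration.
public interface IClock
{
    DateTime Now { get; }
}

// A clock that always returns the same moment (handy for tests).
public sealed class FixedClock : IClock
{
    private readonly DateTime _now;
    public FixedClock(DateTime now) => _now = now;
    public DateTime Now => _now;
}

// Constructor injection: everything ReportService needs is in its constructor.
public sealed class ReportService
{
    private readonly IClock _clock;

    public ReportService(IClock clock)
    {
        // Refuse to be built with a missing dependency.
        _clock = clock ?? throw new ArgumentNullException(nameof(clock));
    }

    public string Header() =>
        "Report generated on " + _clock.Now.ToString("yyyy-MM-dd", CultureInfo.InvariantCulture);
}
```

For example, `new ReportService(new FixedClock(new DateTime(2023, 1, 2))).Header()` returns `"Report generated on 2023-01-02"`. A container simply automates that `new` call.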

But then again, there is the "Learn the rules. Master the rules. Break the rules" concept. I familiarized myself with it before writing this post, so now I can safely skip the second step and break stuff without mastering anything first. I am talking about property injection, which is generally (and for good reason) frowned upon, but which one may want to use for concerns adjacent to the functionality of the class, like logging. One of the things that has always bothered me is having to declare a logger in every single constructor, even though a logger contributes nothing to what the class actually does.

So I had this idea: use constructor injection EVERYWHERE, except for logging. I would create an ILogger<T> property that gets automatically populated with the correct implementation at resolution time. There is only one problem: Microsoft's dependency injection supports neither property injection nor resolution hooks (as far as I could find). So I came up with a solution.

How does it work?

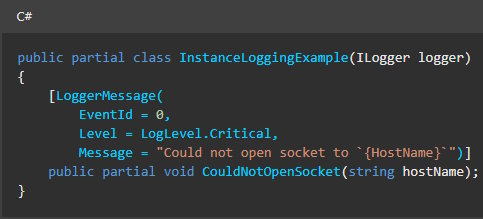

Third of all, a small recap of how ServiceProvider really works.

When you call services.BuildServiceProvider(), you actually call an extension method that does new ServiceProvider(services, someServiceProviderOptions). That constructor is internal, though, so you can't use it yourself. Then, inside the provider class, the GetService method uses a ConcurrentDictionary of service accessors to get your service. If no accessor is cached yet for the requested type, the delegate stored in the private field _createServiceAccessor is used to create one. So my solution: replace the value of that field with a wrapper that also executes our own code.
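To make the mechanism easier to picture, here is a deliberately simplified model of it. This is not the actual ServiceProvider source: `MiniProvider`, `_realized`, `_createAccessor` and `WrapFactory` are hypothetical names, and the `object` scope argument stands in for the internal scope type. It shows why swapping the factory field is enough to intercept resolutions, and what the swap does not catch.

```csharp
using System;
using System.Collections.Concurrent;

// Hypothetical, simplified model of the provider's accessor cache.
// NOT the real ServiceProvider code, only the same overall shape.
public sealed class MiniProvider : IServiceProvider
{
    // Cache: service type -> accessor that produces the instance.
    private readonly ConcurrentDictionary<Type, Func<object, object>> _realized = new();

    // Factory used on a cache miss; this is the field we want to swap.
    private Func<Type, Func<object, object>> _createAccessor;

    public MiniProvider(Func<Type, Func<object, object>> createAccessor) =>
        _createAccessor = createAccessor;

    public object GetService(Type serviceType)
    {
        // On a miss the factory builds an accessor, which is then cached;
        // later calls reuse the cached accessor and never see the factory again.
        var accessor = _realized.GetOrAdd(serviceType, _createAccessor);
        return accessor(this); // "this" stands in for the scope argument
    }

    // Model of the reflection trick: wrap the factory so that accessors
    // created from now on run a handler after producing the instance.
    public void WrapFactory(Action<Type, object> handler)
    {
        var original = _createAccessor;
        _createAccessor = type =>
        {
            var inner = original(type);
            return scope =>
            {
                var instance = inner(scope);
                handler(type, instance);
                return instance;
            };
        };
    }
}
```

In this model, an accessor cached before the swap keeps bypassing the handler, while every accessor created after it runs the handler on each GetService call, not only the first one.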

The solution

Before I show you the code, keep in mind that this applies to .NET 7.0. I guess it will work in most .NET Core versions, but Microsoft could change the internal field's name or behavior, in which case this might break.

Finally, here is the code:

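```csharp
using System;
using System.Reflection;
using Microsoft.Extensions.DependencyInjection;

public static class ServiceProviderExtensions
{
    /// <summary>
    /// Adds a custom handler to be executed after the service provider resolves a service
    /// </summary>
    /// <param name="provider">The service provider</param>
    /// <param name="handler">An action receiving the service provider,
    /// the registered type of the service
    /// and the actual instance of the service</param>
    /// <returns>the same ServiceProvider</returns>
    public static ServiceProvider AddCustomResolveHandler(this ServiceProvider provider,
        Action<IServiceProvider, Type, object> handler)
    {
        // internal field holding the accessor factory (.NET 7 internals, may change)
        var field = typeof(ServiceProvider).GetField("_createServiceAccessor",
            BindingFlags.Instance | BindingFlags.NonPublic)
            ?? throw new InvalidOperationException(
                "ServiceProvider internals changed: _createServiceAccessor not found");
        var accessor = (Delegate)field.GetValue(provider);

        var newAccessor = (Type type) =>
        {
            // build the original accessor once per service type...
            var resolver = (Delegate)accessor.DynamicInvoke(new object[] { type });
            Func<object, object> newFunc = (object scope) =>
            {
                // ...invoke it on every resolution, then run our handler
                var resolved = resolver.DynamicInvoke(new[] { scope });
                handler(provider, type, resolved);
                return resolved;
            };
            return newFunc;
        };

        // the field expects an internal scope type as parameter;
        // delegate variance lets Func<object, object> stand in for it
        field.SetValue(provider, newAccessor);
        return provider;
    }
}
```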

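As you can see, we take the original accessor factory and replace it with a version whose accessors run our own handler right after the service is resolved. Keep in mind that the handler runs on every resolution that goes through GetService (for a singleton, every time it is retrieved, not just when it is created), and only for services requested from the provider: dependencies passed into constructors are built inside the resolution pipeline and never reach the handler. Also, add the handler before resolving anything, because accessors that are already cached are not replaced.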
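One detail that makes the `field.SetValue` call work: the real field is typed with an internal scope class, yet the replacement lambda takes a plain `object`. Delegate variance covers that, since `Func<in T, out TResult>` is contravariant in its parameter and covariant in its result. The sketch below reproduces the situation with a hypothetical `Holder` class, whose private readonly `_factory` field plays the role of `_createServiceAccessor`, and a hypothetical `InternalScope` class playing the internal scope type.

```csharp
using System;
using System.Reflection;

// Hypothetical stand-in for the provider's internal scope type.
public sealed class InternalScope { }

public sealed class Holder
{
    // Same shape as the private accessor factory field.
    private readonly Func<Type, Func<InternalScope, object>> _factory =
        type => scope => "original:" + type.Name;

    public object Resolve(Type type) => _factory(type)(new InternalScope());
}

public static class VarianceDemo
{
    public static void Replace(Holder holder)
    {
        var field = typeof(Holder).GetField("_factory",
            BindingFlags.Instance | BindingFlags.NonPublic);

        // The replacement never mentions InternalScope.
        Func<Type, Func<object, object>> replacement =
            type => scope => "wrapped:" + type.Name;

        // Accepted thanks to variance: a Func<object, object> is a
        // Func<InternalScope, object>, and Func is covariant in its result.
        // Reflection can also overwrite a readonly instance field.
        field.SetValue(holder, replacement);
    }
}
```

After `VarianceDemo.Replace(holder)`, `holder.Resolve(typeof(int))` returns `"wrapped:Int32"` instead of `"original:Int32"`.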

Populating a Logger property

And now we can use it like this to do property injection:

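```csharp
using System;
using System.Reflection;
using Microsoft.Extensions.DependencyInjection;
using Microsoft.Extensions.Logging;

static void Main(string[] args)
{
    var services = new ServiceCollection();
    // ILogger<T> can only be resolved if logging is registered
    // (AddLogging comes from the Microsoft.Extensions.Logging package)
    services.AddLogging();
    services.AddSingleton<ITest, Test>();
    var provider = services.BuildServiceProvider();
    provider.AddCustomResolveHandler(PopulateLogger);
    var test = (Test)provider.GetService<ITest>();
    Assert.IsNotNull(test.Logger);
}

private static void PopulateLogger(IServiceProvider provider,
    Type type, object service)
{
    if (service is null) return;
    // look for a public instance property called Logger
    var propInfo = service.GetType().GetProperty("Logger",
        BindingFlags.Instance | BindingFlags.Public);
    if (propInfo is null) return;
    // it must be an ILogger<T> where T is the class itself
    var expectedType = typeof(ILogger<>).MakeGenericType(service.GetType());
    if (propInfo.PropertyType != expectedType) return;
    // resolve the logger and set it (SetValue can use a private setter)
    var logger = provider.GetService(expectedType);
    propInfo.SetValue(service, logger);
}
```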

See how I've added the PopulateLogger handler, in which I look for a property like

```csharp
public ILogger<Test> Logger { get; private set; }
```

(where the generic type of ILogger is the same as the class) and populate it.
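To check the matching logic in isolation, here is a self-contained sketch that swaps `ILogger<T>` for hypothetical `ILog<T>`/`Log<T>` types and the service provider for a plain `Func<Type, object>`, so it runs without the Microsoft packages. The `Test` class has the same property shape as above, including the private setter, which `PropertyInfo.SetValue` is able to call.

```csharp
using System;
using System.Reflection;

// Hypothetical stand-ins for ILogger<T> / Logger<T>.
public interface ILog<T> { string Category { get; } }
public class Log<T> : ILog<T> { public string Category => typeof(T).Name; }

// Same property shape as in the post: public getter, private setter.
public class Test
{
    public ILog<Test> Logger { get; private set; }
}

public static class LoggerInjection
{
    // Mirrors PopulateLogger, using ILog<> and a plain factory function.
    public static void PopulateLogger(Func<Type, object> getService, object service)
    {
        if (service is null) return;
        var propInfo = service.GetType().GetProperty("Logger",
            BindingFlags.Instance | BindingFlags.Public);
        if (propInfo is null) return;

        // ILog<ConcreteType>: the generic argument must be the class itself
        var expectedType = typeof(ILog<>).MakeGenericType(service.GetType());
        if (propInfo.PropertyType != expectedType) return;

        // SetValue calls the private setter
        propInfo.SetValue(service, getService(expectedType));
    }
}
```

Passing a factory such as `t => Activator.CreateInstance(typeof(Log<>).MakeGenericType(t.GetGenericArguments()))` leaves `test.Logger` set to a `Log<Test>` whose `Category` is `"Test"`.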

Populating any decorated property

Of course, this is kind of ugly. If you want to enable property injection, why not use an attribute that makes your intention clear and requires less reflection? Fine. Let's do it like this:

```csharp
// needs: using System.Linq; using System.Reflection;

// Add handler
provider.AddCustomResolveHandler(InjectProperties);
...
// the handler populates all writable properties that are decorated with [Inject]
private static void InjectProperties(IServiceProvider provider, Type type, object service)
{
    if (service is null) return;
    var propInfos = service.GetType()
        .GetProperties(BindingFlags.Instance | BindingFlags.Public)
        .Where(p => p.CanWrite && p.GetCustomAttribute<InjectAttribute>() != null)
        .ToList();
    foreach (var propInfo in propInfos)
    {
        var instance = provider.GetService(propInfo.PropertyType);
        propInfo.SetValue(service, instance);
    }
}
...
// the attribute class
[AttributeUsage(AttributeTargets.Property, AllowMultiple = false, Inherited = true)]
public class InjectAttribute : Attribute {}
```
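Here is a self-contained run of the attribute-based handler, without the Microsoft packages. `DictionaryProvider`, `IGreeter`, `Greeter` and `Consumer` are hypothetical: `DictionaryProvider` is a toy `System.IServiceProvider` that hands out pre-built instances, and `InjectProperties` repeats the logic of the handler above (only writable `[Inject]` properties are touched).

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Reflection;

[AttributeUsage(AttributeTargets.Property, AllowMultiple = false, Inherited = true)]
public class InjectAttribute : Attribute { }

public interface IGreeter { string Greet(string name); }

public class Greeter : IGreeter
{
    public string Greet(string name) => "Hello, " + name;
}

// Hypothetical consumer: one injected property, one left alone.
public class Consumer
{
    [Inject] public IGreeter Greeter { get; private set; }
    public IGreeter NotInjected { get; set; }
}

// Toy IServiceProvider: returns pre-built instances by type, or null.
public class DictionaryProvider : IServiceProvider
{
    private readonly Dictionary<Type, object> _services = new();

    public DictionaryProvider Add<T>(T instance)
    {
        _services[typeof(T)] = instance;
        return this;
    }

    public object GetService(Type serviceType) =>
        _services.TryGetValue(serviceType, out var s) ? s : null;
}

public static class Injection
{
    // Same logic as InjectProperties: fill every writable [Inject] property.
    public static void InjectProperties(IServiceProvider provider, Type type, object service)
    {
        if (service is null) return;
        var propInfos = service.GetType()
            .GetProperties(BindingFlags.Instance | BindingFlags.Public)
            .Where(p => p.CanWrite && p.GetCustomAttribute<InjectAttribute>() != null);
        foreach (var propInfo in propInfos)
        {
            propInfo.SetValue(service, provider.GetService(propInfo.PropertyType));
        }
    }
}
```

After injecting a `Consumer` with a provider that knows `IGreeter`, `consumer.Greeter` is populated while `consumer.NotInjected` stays `null`.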

Conclusion

I have demonstrated how to add a custom handler that runs after the default Microsoft ServiceProvider class resolves a service, which in turn enables property injection, a single point of change for all classes, etc. I once wrote code to wrap any class into a proxy that would automatically trace all property and method calls with their parameters. You can plug that in as well, with one adjustment: the handler above returns void, so to swap in a proxy it would have to return the (possibly wrapped) instance instead.
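That proxy code isn't part of this post. Purely as an illustration of the idea, here is a sketch built on .NET's `System.Reflection.DispatchProxy`, which can only wrap interfaces; `ICalculator`, `Calculator` and `TracingProxy<T>` are hypothetical names. It records each call with its arguments and forwards it to the real object.

```csharp
using System;
using System.Collections.Generic;
using System.Reflection;

public interface ICalculator
{
    int Add(int a, int b);
}

public class Calculator : ICalculator
{
    public int Add(int a, int b) => a + b;
}

// Hypothetical tracing proxy: logs each call, then forwards it to the target.
public class TracingProxy<T> : DispatchProxy where T : class
{
    private T _target;
    private List<string> _log;

    public static T Wrap(T target, List<string> log)
    {
        // Create<T, TProxy> returns an object implementing T that derives from TProxy.
        var proxy = Create<T, TracingProxy<T>>();
        var tracing = (TracingProxy<T>)(object)proxy;
        tracing._target = target;
        tracing._log = log;
        return proxy;
    }

    protected override object Invoke(MethodInfo targetMethod, object[] args)
    {
        _log.Add($"{targetMethod.Name}({string.Join(", ", args)})");
        return targetMethod.Invoke(_target, args);
    }
}
```

Calling `Add(2, 3)` on `TracingProxy<ICalculator>.Wrap(new Calculator(), log)` returns 5 and appends `"Add(2, 3)"` to `log`.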

Be warned that this solution uses reflection to change the behavior of the .NET 7.0 ServiceProvider class and, if that code changes for some reason, you might need to adapt it to the new implementation.

If you know of a more elegant way of doing this, please let me know.

Hope it helps!

Bonus

But what about people who really, really, really hate reflection and don't want to use it? What about situations where you have a dependency injection framework running for you, but you have no access to the service provider builder code? Isn't there any solution?

Yes. And No. (sorry, couldn't help myself)

The issue is that ServiceProvider, ServiceCollection and all that jazz are pretty closed up. There is no solution I know of that solves this issue. However... there is one particular point in the dependency injection setup that can be hijacked, and that is... the adding of the service descriptors themselves!

You see, when you call services.AddSingleton<Something, Something>(), what gets called is yet another extension method, because ServiceCollection itself is nothing but a list of ServiceDescriptor objects. The Add* extension methods come from the ServiceCollectionServiceExtensions class, which contains a lot of methods that all boil down to just three different effects:

- adding a ServiceDescriptor on a type (associating a service type with an implementation type) with a specific lifetime (transient, scoped or singleton)

- adding a ServiceDescriptor on an instance (associating a service type with a specific instance of a class), which is always a singleton

- adding a ServiceDescriptor on a factory method (associating a service type with a function that creates the instance), again with a specific lifetime

If you think about it, the first two can be translated into the third. To instantiate a type using a service provider, you call ActivatorUtilities.CreateInstance(provider, type), and a factory method that returns a specific instance of a class is trivial.
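Here is a sketch of that translation on a toy registry, so it runs without the NuGet packages. `FactoryRegistry`, `IMessage`, `HelloMessage` and `Printer` are hypothetical, and `CreateWithConstructor` is a much simplified stand-in for `ActivatorUtilities.CreateInstance` (it just picks the public constructor with the most parameters). Lifetimes are ignored: every GetService call runs the factory again.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical toy registry in which every registration is a factory.
public class FactoryRegistry : IServiceProvider
{
    private readonly Dictionary<Type, Func<IServiceProvider, object>> _factories = new();

    // Third form: the factory itself.
    public FactoryRegistry Add(Type serviceType, Func<IServiceProvider, object> factory)
    {
        _factories[serviceType] = factory;
        return this;
    }

    // First form, translated: type -> factory that constructs the implementation.
    public FactoryRegistry Add(Type serviceType, Type implementationType) =>
        Add(serviceType, sp => CreateWithConstructor(sp, implementationType));

    // Second form, translated: instance -> factory that always returns it.
    public FactoryRegistry AddInstance(Type serviceType, object instance) =>
        Add(serviceType, _ => instance);

    public object GetService(Type serviceType) =>
        _factories.TryGetValue(serviceType, out var f) ? f(this) : null;

    // Simplified stand-in for ActivatorUtilities.CreateInstance.
    private static object CreateWithConstructor(IServiceProvider sp, Type type)
    {
        var ctor = type.GetConstructors()
            .OrderByDescending(c => c.GetParameters().Length)
            .First();
        var args = ctor.GetParameters()
            .Select(p => sp.GetService(p.ParameterType)
                ?? throw new InvalidOperationException($"No service for {p.ParameterType}"))
            .ToArray();
        return ctor.Invoke(args);
    }
}

// Hypothetical sample services.
public interface IMessage { string Text { get; } }
public class HelloMessage : IMessage { public string Text => "hello"; }
public class Printer
{
    public IMessage Message { get; }
    public Printer(IMessage message) => Message = message;
}
```

Registering `IMessage` as `HelloMessage` and `Printer` as itself, `GetService(typeof(Printer))` builds a `Printer` whose message text is `"hello"`; switching `IMessage` to `AddInstance` makes every `Printer` receive that exact instance.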

So, the solution: just copy-paste the contents of ServiceCollectionServiceExtensions and make all of the methods end up in an Add method that registers a factory-based service descriptor. Now, instead of using the extensions from Microsoft, you use your own class, with the same effect. Next step: wrap that factory method in a function that also executes your own code.

Since this is a bonus, I'll let you implement everything except the Add method, which I provide here:

```csharp
// original code
private static IServiceCollection Add(
    IServiceCollection collection,
    Type serviceType,
    Func<IServiceProvider, object> implementationFactory,
    ServiceLifetime lifetime)
{
    var descriptor = new ServiceDescriptor(serviceType, implementationFactory, lifetime);
    collection.Add(descriptor);
    return collection;
}

// updated code
private static IServiceCollection Add(
    IServiceCollection collection,
    Type serviceType,
    Func<IServiceProvider, object> implementationFactory,
    ServiceLifetime lifetime)
{
    Func<IServiceProvider, object> factory = (sp) =>
    {
        var instance = implementationFactory(sp);
        // no stack overflow, please
        if (instance is IDependencyResolver) return instance;
        // look for a registered instance of IDependencyResolver (our own interface)
        var resolver = sp.GetService<IDependencyResolver>();
        // intercept the resolution and replace it with our own
        return resolver?.Resolve(sp, serviceType, instance) ?? instance;
    };
    var descriptor = new ServiceDescriptor(serviceType, factory, lifetime);
    collection.Add(descriptor);
    return collection;
}
```

All you have to do is create the IDependencyResolver interface, register your own implementation of it and do whatever you want in the Resolve method, including the logger instantiation, the Inject attribute handling or the wrapping of objects, as above. All without using reflection to tamper with the ServiceProvider internals.

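The catch here is that you have to make sure you don't use the default Add* methods when you register your services, or this won't work. The same goes for services that other libraries register through their own extension methods (AddLogging, for example): those don't go through your Add method and won't be intercepted.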
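The interface itself is left to you. One possible shape, assuming the signature implied by the call in the updated Add method, is `object Resolve(IServiceProvider sp, Type serviceType, object instance)`. To keep the sketch runnable without the NuGet packages, `ToyServices` is a hypothetical dictionary-based provider whose `Add` wraps factories the same way as the updated Add; `TracingResolver`, `IGreeter` and `Greeter` are hypothetical as well.

```csharp
using System;
using System.Collections.Generic;

// Our own interface, with the signature used by the updated Add method.
public interface IDependencyResolver
{
    object Resolve(IServiceProvider sp, Type serviceType, object instance);
}

// Hypothetical resolver: records what was resolved and returns it unchanged.
// A real one could set [Inject] properties or return a wrapping proxy instead.
public class TracingResolver : IDependencyResolver
{
    public List<Type> Resolved { get; } = new();

    public object Resolve(IServiceProvider sp, Type serviceType, object instance)
    {
        Resolved.Add(serviceType);
        return instance;
    }
}

// Toy provider whose Add wraps every factory like the updated Add does.
public class ToyServices : IServiceProvider
{
    private readonly Dictionary<Type, Func<IServiceProvider, object>> _factories = new();

    public ToyServices Add(Type serviceType, Func<IServiceProvider, object> implementationFactory)
    {
        _factories[serviceType] = sp =>
        {
            var instance = implementationFactory(sp);
            // resolving the resolver itself must not call the resolver
            if (instance is IDependencyResolver) return instance;
            var resolver = (IDependencyResolver)sp.GetService(typeof(IDependencyResolver));
            return resolver?.Resolve(sp, serviceType, instance) ?? instance;
        };
        return this;
    }

    public object GetService(Type serviceType) =>
        _factories.TryGetValue(serviceType, out var f) ? f(this) : null;
}

public interface IGreeter { string Greet(string name); }
public class Greeter : IGreeter { public string Greet(string name) => "Hello, " + name; }
```

Whatever `Resolve` returns is what callers receive, so a resolver returning something other than `instance` (a proxy, for example) is enough to cover the wrapping scenario.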

There you have it, bonus content not found on dev.to ;)